The Automation Debate Is Consuming the Wrong Conversation

One question is on everyone's mind right now: "Will AI replace our people?" It's understandable. The headlines are loud. The anxiety is real. But Anthropic's most recent Economic Index report, released this month, tells a different story than the one dominating most boardroom conversations.

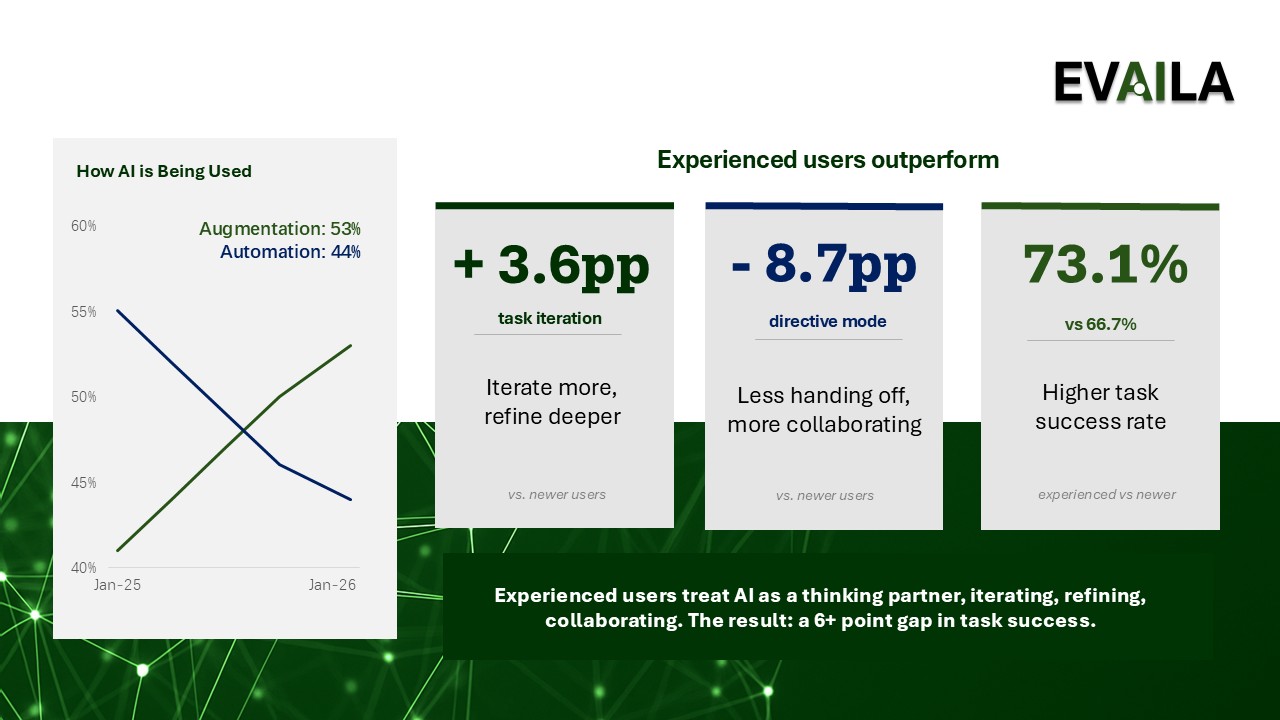

Augmentation, where AI collaborates with and complements human work, continues to grow in real-world usage, while full automation is declining. This isn't a philosophical statement. It's observed behavior, drawn from a sample of one million conversations across both consumer and API usage. Users are bringing AI into their work as a thinking partner, a drafting tool, a sounding board. They're iterating with it, not handing off to it.

That distinction matters more than most leaders realize.

What Augmentation Actually Looks Like in Practice

Augmentation isn't a soft concept. It has a specific shape in the data. It shows up as feedback loops: a user and an AI working back and forth toward a better output. It shows up as task iteration, where the human sets direction and the AI executes and refines. It shows up as learning, where people use AI to build their own understanding, not just to produce a deliverable faster.

What it is not: a user typing a prompt, getting an answer, and walking away. That pattern, full delegation, exists, but it's the minority.

The implication is significant. If your workforce is augmenting rather than automating, then the variable that determines how much value they extract from AI is not the tool; it's the person using it, and specifically their ability to frame a problem, evaluate AI output critically, and iterate toward something useful. These are human skills. And most organizations have not invested in them.

Evaila lens: This is exactly why we build AI adoption programs around the person first. The technology is accessible. The capability to use it well is not. We see organizations deploy tools broadly, then wonder why adoption plateaus. The gap is almost always a skills and confidence gap, not a technology gap.

Augmentation Rewards Experience... Which Changes Your Rollout Strategy

The report also shows that experienced AI users bring more complex, higher-value work to the tool, and achieve meaningfully higher success rates in their conversations. High-tenure users are more likely to iterate rather than delegate, and they bring work that is more sophisticated and harder to automate. The more someone learns to work with AI, the more value they extract from it.

This runs counter to how many organizations sequence their AI rollouts. A lot of enterprise AI programs start broad: they push tools to every team at once, run a company-wide training, and measure adoption by license utilization. That's a coverage strategy, not a value strategy. If the biggest gains from augmentation come where experience and expertise are deepest, then your highest-impact early investments are in the roles where work is most complex and where AI has the most room to operate alongside deep expertise.

Start there. Build fluency. Document what good augmentation looks like in those roles. Then use that as the playbook for scaling.

The Organizational Gap That Augmentation Exposes

There's a harder truth inside the augmentation data. If AI is primarily a collaborator rather than a replacement, then the quality of human judgment remains central. Bad judgment, augmented by AI, scales faster. Unclear goals, supported by AI, generate more polished confusion. The organizational conditions that made human work effective (clear ownership, well-defined outcomes, good decision frameworks) matter just as much in an AI-augmented environment, maybe more.

This is where many organizations are underinvesting. They're focused on tool access and ignoring the organizational design questions that determine whether augmented work actually improves outcomes. Who owns AI use in each function? How do teams evaluate AI-generated work before it moves forward? What does quality look like when AI is in the loop?

These are governance questions. And they're leadership questions, not just IT questions.

Evaila lens: Our AI Readiness Assessment explicitly surfaces these gaps before organizations invest in broad rollout. Understanding where the organizational conditions are and aren't in place is what separates a successful augmentation strategy from a well-intentioned experiment.

What to Do With This

If the data confirms that augmentation is the dominant pattern, the strategic question becomes: are you building for it?

That means investing in the skills that make augmentation valuable: critical evaluation, clear problem framing, iterative working habits. It means sequencing adoption where expertise is deepest, then scaling the model. It means putting governance in place so that AI-augmented work is accountable, not just faster.

The organizations that will compound the most value from AI in the next two to three years are not the ones with the most tools. They're the ones where people know how to use AI well, and where the organizational structure supports and evaluates that use.

If you're not sure where your organization stands, our AI Readiness Assessment will tell you, and our AI Adoption Plan will show you what to do about it.

Demystifying AI. Delivering Results.